AI tokens are emerging as a kind of currency that will help in recruitment, budgeting and productivity, Nvidia’s CEO Jensen Huang said during a keynote address at the company’s GTC conference. (The show runs through Thursday, March 19 in San Jose, CA.)

AI tokens will also increasingly influence the progress and bottom line of companies, Huang said. “Tokens are the new commodity,” he said, later adding that “computing used to be retrieval-based, now it’s generative.”

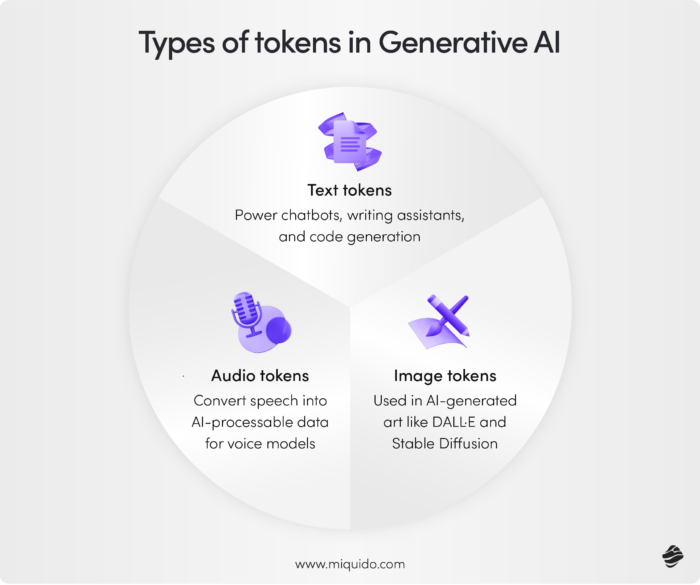

Tokens are central to modern AI computing, much as bits are the basic unit of conventional CPU-based computing. AI is being integrated into most new software products.

“This concept… of fusing structured data and generative AI will repeat itself in one industry after another,” Huang said.

Tokens are also becoming a fundamental component as AI implementations drive up company revenues. “If they could just get more capacity, they could generate more tokens, their revenues would go up,” Huang said.

Cloud providers offer pricing plans that charge based on AI tokens, particularly for text-based models. Video-based models are typically not token-based and are priced either per job or based on GPU usage time.
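
To make the two billing models concrete, here is a minimal Python sketch of the arithmetic. The function names and every rate in it are hypothetical, chosen only to keep the numbers easy to follow; they are not any provider's actual prices.

```python
def token_cost(input_tokens: int, output_tokens: int,
               usd_per_million_input: float,
               usd_per_million_output: float) -> float:
    """Cost of one text request billed per token (rates are per million tokens)."""
    return (input_tokens * usd_per_million_input
            + output_tokens * usd_per_million_output) / 1_000_000


def gpu_time_cost(gpu_seconds: float, usd_per_gpu_hour: float) -> float:
    """Cost of one job billed by GPU usage time."""
    return gpu_seconds / 3600 * usd_per_gpu_hour


# Hypothetical rates and workloads, for illustration only.
text_request = token_cost(2_000, 500,
                          usd_per_million_input=1.0,
                          usd_per_million_output=4.0)
video_job = gpu_time_cost(90, usd_per_gpu_hour=4.0)

print(f"text request: ${text_request:.4f}")
print(f"video job:    ${video_job:.4f}")
```

Under these assumed rates, the text request is billed on the tokens it consumes and produces, while the video job is billed on how long it holds a GPU, regardless of output size.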

Huang said he could envision tokens being offered as a perk to developers, who will need the units to improve productivity. “I could totally imagine in the future every single engineer in our company will need an annual token budget,” Huang said.

The base pay of engineers will be a few hundred thousand dollars a year, and “I’m going to give them probably half of that on top of it as tokens so that they could be amplified 10x,” Huang said.
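
As a rough illustration of what such a budget might buy, the sketch below converts a dollar allowance into a token count. The $300,000 base pay is only one assumed reading of “a few hundred thousand dollars,” and the $10-per-million-token price is hypothetical; Huang gave neither figure.

```python
def annual_token_budget(base_pay_usd: float, token_share: float,
                        usd_per_million_tokens: float) -> float:
    """Number of tokens an engineer could buy with a pay-linked allowance.

    token_share is the fraction of base pay granted as tokens
    (0.5 for "half of that on top").
    """
    budget_usd = base_pay_usd * token_share
    return budget_usd / usd_per_million_tokens * 1_000_000


# Assumed figures, not from the keynote: $300,000 base pay and a
# blended price of $10 per million tokens.
tokens = annual_token_budget(300_000, 0.5, 10.0)
print(f"{tokens:,.0f} tokens per year")
```

The result scales inversely with price, so the same dollar allowance buys more tokens if per-token costs fall.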

How many tokens come with the job is already a recruiting tool in Silicon Valley. “And the reason for that is very clear — because every engineer that has access to tokens will be more productive,” Huang said.

The demand for tokens is unprecedented, which is keeping prices high. But the cost should start to level off or drop as new technologies ramp up.

At GTC, Huang introduced a host of new technologies, including new GPUs called Rubin and CPUs called Vera. Nvidia has fused them with a new inference chip from Groq.

“We’re going to take our token generation rate from 22 million to 700 million—a 350 times increase,” Huang said.

The chips fit into what Nvidia calls AI factories, which generate tokens that help companies implement AI plans. “AI factory revenues are equal to tokens-per-watt. With power constraints, every unused watt is revenue lost,” Huang said.
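
One way to read the tokens-per-watt argument: if a site's power is capped, token output (and so revenue) is the power actually used multiplied by efficiency. The Python sketch below works through that under stated assumptions. The site size, efficiency, price, and utilization figures are all hypothetical, and “tokens per watt” is treated here as tokens per second per watt, which is the same as tokens per joule.

```python
def tokens_per_second(power_watts: float, tokens_per_joule: float,
                      utilization: float = 1.0) -> float:
    """Tokens generated per second under a fixed power budget.

    One watt is one joule per second, so watts * tokens-per-joule = tokens/s.
    utilization is the fraction of the power budget doing useful work.
    """
    return power_watts * utilization * tokens_per_joule


def revenue_per_second(tokens_s: float, usd_per_million_tokens: float) -> float:
    """Revenue rate for a given token rate and per-million-token price."""
    return tokens_s / 1_000_000 * usd_per_million_tokens


# Hypothetical site: 1 MW power cap, 2 tokens per joule, $5 per million tokens.
POWER_WATTS = 1_000_000
TOKENS_PER_JOULE = 2.0
USD_PER_MILLION_TOKENS = 5.0

full = revenue_per_second(
    tokens_per_second(POWER_WATTS, TOKENS_PER_JOULE), USD_PER_MILLION_TOKENS)
partial = revenue_per_second(
    tokens_per_second(POWER_WATTS, TOKENS_PER_JOULE, utilization=0.8),
    USD_PER_MILLION_TOKENS)

print(f"revenue at full power:  ${full:.2f}/s")
print(f"revenue at 80% of cap:  ${partial:.2f}/s")
print(f"revenue left unused:    ${full - partial:.2f}/s")
```

In this simplified model, the only ways to raise revenue under a fixed cap are to use more of the available power or to generate more tokens per joule.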

So far at GTC, most of Nvidia’s token messaging has been around inference rather than training, but inferencing won’t involve tokens costing “gigabucks,” said Jack Gold, principal analyst at J. Gold Associates.

Nvidia claims Vera Rubin will cut computing costs even as system prices go up, but that claim isn’t as simple as it appears on the surface, Gold said.

Nvidia is already well-established in training, which leaves room for inferencing as a key growth area for the chip maker. The cost of generating tokens in inferencing is expected to be lower.

“Inference is cost-sensitive, just like cloud hosting,” Gold said. “Even though systems are expensive, we can enable you to generate lots more tokens, and hence more revenue… [That] is a critical message for them going forward.”

Beyond data centers, Nvidia is also bringing token generation on premises. That move dovetails with the release of desktop AI PCs like [Dell’s Pro Max GB300](https://paserbyp.dreamwidth.org/828244.html), which uses Nvidia’s data-center GPUs, making it the most powerful AI PC to date.

“Customers now are really having that realization of the cost of a token in the cloud. And they’re now looking for more cost-effective options to invest in infrastructure, including on premises,” Charlie Walker, head of product at Dell, said in a briefing.

Huang in his speech also highlighted OpenClaw, the open framework for building AI agents, and introduced NemoClaw, Nvidia’s enterprise-grade platform based on that technology.

OpenClaw helps AI agents interact and coordinate tasks, which can enable long-running workflows. That can generate a lot of tokens.

“The OpenClaw event cannot be understated. This is as big of a deal as HTML. This is as big of a deal as Linux. We have now a world-class open agentic framework that all of us could use to build our OpenClaw strategy,” Huang said.

Why rely on a data center when you can run full-fledged AI models — typically found in the cloud — on your desktop? That’s the argument Dell is making with its new PCs, one of which has a data-center class GPU and can run AI models with a trillion parameters.
