Communism by Elon Musk?

Apr. 28th, 2026 08:13 amWhile the Tesla and SpaceX CEO admitted he’s “more optimistic” than most, he insisted people shouldn’t stress over building a nest egg for the distant future, contrary to the staid advice of nearly all other financial professionals.

“Don’t worry about squirreling money away for retirement in 10 or 20 years,” said the world’s richest man on the Moonshots with Peter Diamandis podcast(https://youtu.be/RSNuB9pj9P8?si=WdjwfK_eMoRjkiOV) in January. “It won’t matter.”

Part of Musk’s controversial take is based on his vision of a world transformed by rapidly improving AI, robotics, and energy technology.

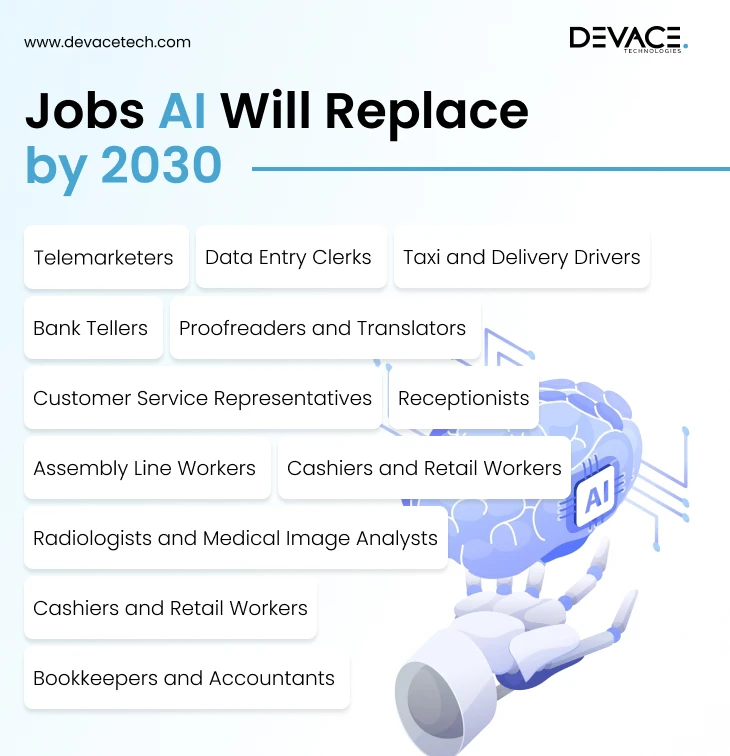

By 2030, AI will surpass “the intelligence of all humans combined,” Musk predicted. He also claimed eventually there will be more humanoid robots than humans on Earth. Slowly, the traditional job will be replaced as well, with white collar positions first on the list.

“Anything short of shaping atoms, AI can do probably half or more of those jobs right now,” he said.

The advances could lead to such big productivity increases, he said, that they will surpass “what people possibly could think of as abundance.”

Rather than a universal income, everyone will enjoy a “universal ‘you can have whatever you want’ income” in the future, he claimed. In this world that Musk foresees, the link between individual wages, savings, and living standards will no longer make sense.

Even without savings, AI will help people obtain better medical care than what is currently available within five years. It will also remove any limit on the availability of goods, services, or educational opportunities.

Musk’s comments build on his earlier claims that AI and humanoid robots will make work “optional” within 10 to 20 years and render money itself irrelevant. Musk previously compared the future of work to leisure activities like playing sports or video games rather than a survival necessity.

“If you want to work, [it’s] the same way you can go to the store and just buy some vegetables, or you can grow vegetables in your backyard. It’s much harder to grow vegetables in your backyard, and some people still do it because they like growing vegetables,” Musk said during the U.S.-Saudi Investment Forum in November(https://fortune.com/2026/01/19/when-does-elon-musk-say-work-will-be-optional-and-money-will-be-irrelevant-ai-robotics).

To be sure, Musk’s predictions about the future come at a time where many Americans are struggling to save. In part due to persistent inflation and weak wage growth, only 55% of American adults said they had a “rainy day” fund equal to three months of expenses saved up for an emergency, down from a high of 59% in 2021, according to a survey by the Federal Reserve. Fewer than half of those surveyed said they could cover an expense of $2,000 or more with their savings.

Surveys also consistently show a large share of Americans are behind on retirement savings or have little to nothing set aside for their post-work life.

Musk is also not blind to the potential downsides of a society where people don’t need to earn a living. A high universal income could come hand-in-hand with social unrest, he warned, as people may face a deeper crisis of meaning.

“If you actually get all the stuff you want, is that actually the future you want? Because it means that your job won’t matter,” Musk said.

Apple said yesterday that longtime CEO Tim Cook will step down as CEO later this year and transition to executive chairman of the board. As many on the Street expected, he will be succeeded by John Ternus, currently Apple's senior vice president of hardware engineering. Ternus will take the helm on Sept. 1.

Apple said yesterday that longtime CEO Tim Cook will step down as CEO later this year and transition to executive chairman of the board. As many on the Street expected, he will be succeeded by John Ternus, currently Apple's senior vice president of hardware engineering. Ternus will take the helm on Sept. 1."Investors will view this as mixed, as this was a sudden move to executive chairman [and] there was clearly a push for change at the C-suite. These will be big shoes to fill, and the timing of Cook exiting stage left as CEO could make sense but also creates questions," Wedbush tech analyst Dan Ives said in a note.

Shares fell 1% in pre-market trading on today(Tuesday).

"Apple is making a major transition on its AI strategy, and longtime CEO and legendary Cook leaving now is a surprise. There was growing pressure on Apple to develop a successful AI strategy, and Cook must feel that the pieces are now in place heading into WWDC to hand over the reins at this time. Cook leaves a lasting legacy in Cupertino, and there will be a lot of pressure on Ternus to produce success out of the gates, especially on the AI front."

Here are seven early to-do's for Ternus as Apple CEO. Notching early wins could go a long way in Ternus building Street cred and silencing any doubt he is the right person at the right time to lead the tech giant:

1. Make Apple AI relevant.

Ternus will get a head start on this from Cook, but he needs to build on it and be unafraid to ink other partnerships. Apple and Google (GOOG) recently entered a multiyear partnership to integrate a custom version of the Gemini AI model as the new foundation for Siri and Apple Intelligence. This collaboration is worth an estimated $1 billion annually.

2. Set Apple up for life after the iPhone.

OpenAI officially acquired Jony Ive’s AI hardware startup, io Products, Inc., in May 2025 for approximately $6.5 billion to form its internal devices division. Despite recent shifts in focus, OpenAI (OPAI.PVT) is still expected to release its first piece of hardware with an eye toward challenging the iPhone this year. Ternus has to use his extensive hardware knowledge to think about what life after the iPhone looks like — and it needs to be more than the foldable device rumored to debut later this year.

3. Reset the size of the Apple workforce in the age of AI, like others in Big Tech.

Big Tech players from Oracle (ORCL) to Amazon (AMZN) to Meta (META) are firing people en masse amid pushes to adopt AI workflows. As is customary with a new CEO, Ternus may want to use his new position to resize Apple's workforce and reallocate those savings to growth investments or shareholder-friendly actions. Doing so would give Ternus an early win with shareholders and Wall Street, even though the headlines wouldn't look good. Apple is estimated to have 80,000 workers in the US and more than 160,000 globally.

4. Decide if Apple wants to put more gas on content ambitions to challenge Amazon and Netflix — or pull back.

Apple has spent an estimated $25 billion to $30 billion on original content for Apple TV+ since its 2019 debut. That's a lot of money for not a lot of hits, other than, say, The Morning Show with Jennifer Aniston and Reese Witherspoon and the F1 movie with Brad Pitt. Ternus has to figure out if Apple wants to go all in on content like Netflix (NFLX) and Amazon (AMZN).

5. Refresh the Apple management team.

It's standard practice for any incoming CEO. I suspect he has his preferred management team written up on a doc.

6. Befriend President Trump as Tim Cook did.

Tim Cook has conducted a masterclass in working with the unpredictable Trump. Ternus cannot waste time talking to Trump and investing time in building that relationship.

7. Meet Berkshire Hathaway CEO, Greg Abel.

Berkshire Hathaway (BRK-B) — now led by Greg Abel rather than Tim Cook fan Warren Buffett — holds approximately 228 million Apple shares. The stake is valued at roughly $62 billion, making it the largest single holding in Berkshire’s stock portfolio by a significant margin. It will be good for Ternus to establish a strong relationship with the fellow new CEO Abel. There is nothing like having a Berkshire seal of approval, especially when times get tough.

In additionally, TSMC is reportedly working to build 1-nanometer chips by 2029; Apple could be the big beneficiary.

Apple Silicon has another big journey to take, one that means Apple will probably be the first to introduce 1.4- and 1-nanometer chips inside its systems. If that happens, Macs, iPhones, and iPads will continue to lead the industry in performance per watt.

Why do I say this? Mainly because reports claim TSMC is working to build sub 1nm chips by 2029 — and Apple remains that company’s most important customer, despite competition from AI server manufacturers today.

Demand for AI servers could yet slow, given the looming energy crisis and the trend toward on-prem and edge AI services. I don’t think the current level of investment in AI is sustainable, which is why I think Apple will continue to be TSMC’s lead customer once that bubble, inevitably, bursts.

The latest news is that TSMC intends to begin trial production of its sub-1nm A10 process tech by 2029, setting up Apple to be the first big company to use these new processors inside its hardware when volume production begins.

What’s interesting is that this move to 1nm isn’t just about making transistors smaller, but also about ensuring close integration between chips, memory, and energy systems. A report in 2021 said TSMC was able to reach 1nm by using bismuth instead of silicon in the design.

Apple, of course, already works very, very hard to integrate those different elements on its existing processors, which is why it delivers better performance at lower wattage than competitors. That integration means its systems can accomplish a great deal more from lower quantities of memory, which helps protect the company’s margins against rapidly accelerating RAM prices.

We currently expect up to 30% improvement in both performance and power efficiency from these new chip designs. That implies that iPhone Pro models introduced in 2030 (or possibly 2031) will be powered by these new chips.

TSMC is expected to introduce 1.6nm chips in the next 18 months, though Apple might choose to skip that iteration to guarantee a leadership position once the 1.4nm TSMC process hits in 2028. That iteration will deliver yet another big speed and performance boost to Apple’s devices, with Apple becoming the first PC, tablet, or smartphone manufacturer to ship 1.4nm systems at scale.

What benefits can we expect? During TSMC’s 2025 North American Symposium the company said 1.4nm chips should be 15% faster and consume around 30% less power than the processors inside Apple’s current devices. That’s all good, but it is also interesting to note that the iPhone 17 series hasn’t even made the leap to 2nm as yet, with Apple using TSMC’s N3P process. So, the company has lots of scope to secure the future of Apple Silicon.

If it is correct that Apple will skip TSMC’s 1.6nm process and then climb aboard the 1.4nm and 1nm chips, we could see the two big processor development chapters between now and 2030. This year we can see it introduce 2nm chips, with 1.4nm to follow probably in 2028 and the huge leap to sub-1nm processors to follow in 2030-31.

As these chips will be deployed across Apple’s hardware platforms, including within new designs we don’t know about yet, it means you can anticipate highly significant performance gains wherever in the ecosystem you happen to sit. Whether you’re looking at the next-generation MacBook Neo, MacBook Pro, iPhone or iPhone e, you’ll see impressive performance gains unlocked in all into the last half of this decade.

Those performance gains, combined with improved energy consumption, allows Apple’s hardware designers to work towards thinner, lighter and smaller devices in a range of design configurations — some of which could not have existed before. (Think about spectacles with the kind of performance you once got from a Mac.) The way ahead is clear. Apple has a wide open road for chip design, and while tensions between today’s US and China could derail some of these plans, TSMC’s continued investment in fabrication capacity in the US might help mitigate against even that potential calamity.

Who is Next?

Mar. 31st, 2026 04:23 pm System, administer thyself.

System, administer thyself.That’s what’s happening in IT departments as automation takes on more tasks once handled by system administrators. The role has already shifted in recent years with the rise of cloud computing and DevOps. And while experts say many of the tasks that make up sysadmin roles aren’t likely to disappear, the job title could change as the work becomes more big-picture and supervisory.

A survey(More details: https://www.action1.com/2025-ai-impact-on-sysadmins-survey-report) of system administrators published last year found that majorities of respondents expected tasks like log analysis (80%), vulnerability prioritization (67%), and troubleshooting (55%) to be automated by 2027. But the report also showed that wider adoption of AI has exposed some limitations, including problems with accuracy, reliability, data privacy, and security.

IT pros we talked with said they see the job morphing from a reactive monitor of systems to a proactive remediator who plays a more supervisory role to agents and other automation tools.

“It’s not like you can do 100% of your tasks through these automated agents—it’s 10%, 20%. Valuable, but it’s not like you’re going to totally delegate your work to some AI agent that’s gonna do everything for you.”—Pat Casey, co-founder and CTO of ServiceNow.

“From the sysadmin perspective, you’re spending a lot of your time just watching dashboards—eyes-on-glass type of stuff,” Philippe Deblois, Dynatrace’s global VP of solutions engineering, told us. “It has moved a little bit away from that as we’ve added automation over the years. But I see now a path with AI and agents…we’re really moving into this age of…autonomous operations. Maybe that’s still a little pie in the sky in terms of [being] fully automated, but definitely more supervised.”

One of the biggest innovations that AI provides, according to Brent Ellis, principal analyst at Forrester, is the ability to “stitch together disparate metrics” in a way that wasn’t possible before. The next step will be connecting that analysis work to AI agents that perform actual actions within an environment, Ellis said.

“You connect that AI model that basically said, ‘Oh, here’s a problem.’ You connect that to a reasoning model that can then propose an action plan to resolve that situation. And then you connect it to a coding model that creates, say, a Terraform script to go and implement that plan. And suddenly, the role of the human in that is as validator,” Ellis said. “What that human is there to do is to define what the environment should be, what the architecture should be, and to validate that the output of that platform is something that’s not going to cause problems.”

Not all systems have high potential for automation, but those that do are seeing two broad changes, according to Pat Casey, co-founder and CTO of ServiceNow. One is that AI agents are able to help with more of the work, and the other is that the systems themselves might have new AI features that the admin needs to manage, Casey told...

AI agents have been all the rage for over a year now, but there’s still time before the agentic transformation actually begins in earnest. “[It’s] early days, because it’s spotty in terms of which products they’ve made that kind of investment [in],” Casey said. “It’s not like you can do 100% of your tasks through these automated agents—it’s 10%, 20%. Valuable, but it’s not like you’re going to totally delegate your work to some AI agent that’s gonna do everything for you.”

But like many other fields right now, as AI replaces more of the rote tasks involved in sysadmin work, companies worry about how the next generation of people guiding this work will be able to learn the ropes.

Previously, a sysadmin might focus on one particular type of compute or storage. But because AI models are better at understanding how systems interconnect, it could consolidate those roles from, say, three different admins to a single efficient one with an AI tool, Forrester’s Ellis said.

“AI will shrink certain entry-level operation roles, but it’ll expand the extreme high-skill system leadership roles,” Sandeep Kumbhat, head of global field CTO at Okta, said. “Those will be more in number as compared to sysadmin.”

With that expansion of senior level roles, companies will need to be proactive about training new recruits to understand bigger-picture structures and offering younger employees effective mentoring, according to Ellis.

“You want to get those people engaged in operations as soon as possible. You should also expose them more holistically across the environment,” he said. “Don’t force people into silos. Because if they’re forced into silos, the information they create is going to be commodified by the AI very quickly.”

Nobody I talked to for this article thought that companies will necessarily still be hiring for a job called “system administrator” a few years from now. Possible new titles they predicted ranged from simple tweaks like “system owner” or “system governor” to a merging with the role of reliability engineer or platform engineer, and entirely new titles like “AI supervisor” or “AI operator.”

But that doesn’t mean that this type of work is going to vanish soon. “The job is changing, but I have just seen zero evidence that it is going away,” Casey said. “If anything, it’s an exciting time to be in that sort of role, because…you’re getting a chance to do a lot of new stuff, to do the same thing you did before in a different way—hopefully a more efficient, more fun way.”

Dell Pro Max GB300

Mar. 18th, 2026 04:13 pm Why rely on a data center when you can run full-fledged AI models — typically found in the cloud — on your desktop? That’s the argument Dell is making with its new PCs, one of which has a data-center class GPU and can run AI models with a trillion parameters.

Why rely on a data center when you can run full-fledged AI models — typically found in the cloud — on your desktop? That’s the argument Dell is making with its new PCs, one of which has a data-center class GPU and can run AI models with a trillion parameters.Dell’s Pro Max GB300 desktop has Nvidia’s Grace Blackwell Ultra GB300 superchip, the same processor used in data centers to run some of the most demanding AI models.

“Imagine a small company…loading a one trillion parameter Kimi K2.5 model onto the GB300,” Charlie Walker, head of product at Dell, said in a briefing.

The Pro Max GB300 desktop arrives as more AI technologies are being designed to run on PCs and companies look to cut cloud costs. For example, OpenClaw is AI technology that can run agents to automate work on PCs, while also coordinating those tasks with cloud-based large language models (LLMs).

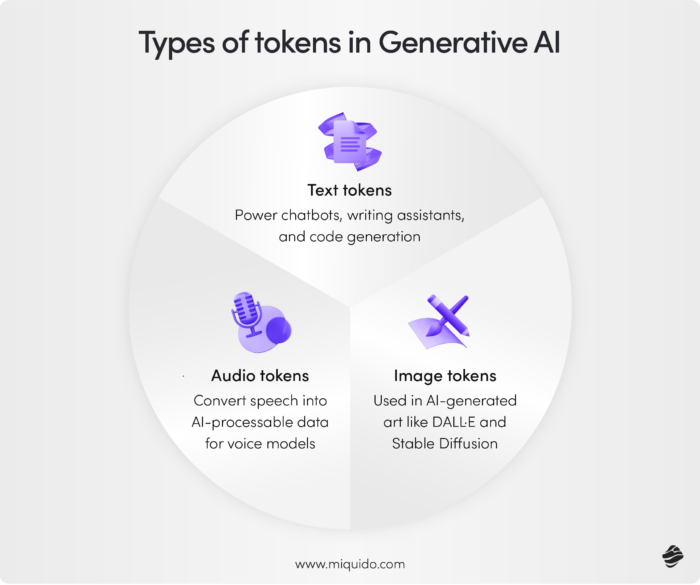

Nvidia has essentially pulled the GB300 superchip from the data center and stuffed it into the desktop. And because AI computing depends on tokens, companies can save money because tokens cost significantly less to generate on desktops than in the cloud.

“You think about token generation as driving revenue for the company — pull that out [from the cloud], put that at desk side,” Walker said(For more details about AI tokens: https://paserbyp.dreamwidth.org/827932.html).

Still, the impressive AI performance has a downside: the Pro Max GB300 is a 1600-watt monster, which means electric bills will be higher. Dell didn’t share the desktop’s price, but it will be expensive – CDW has priced an MSI GB300 workstation at $97,000(More details: https://www.tomshardware.com/tech-industry/artificial-intelligence/nvidia-launches-dgx-station-with-its-bleeding-edge-gb300-grace-blackwell-superchip-now-available-to-order-and-will-begin-shipping-in-the-coming-months).

Nvidia is leading the development of deskside AI PCs with its GPUs are sold via PC makers. The Pro Max GB300 has the DGX B300 with 252GB HBM3e of memory. Nvidia has a $4,699 AI desktop called DGX Spark, which has a less powerful GPU.

These high-end PCs “give developers the ability to put something on or under their desk with complete confidence in data security and access and control,” Nvidia’s Chris Marriott, vice president of enterprise platforms, said in a press call.

Desktops are a great place for experimentation, Marriott said, because developers can fine-tune models on desktops before deploying in the cloud.

Developing AI models isn’t as simple as issues prompts and getting a response back, said Marriott. Some AI tasks will start running for weeks and months, especially with agents like OpenClaw talking to each other and companies running code.

Longer tasks generate more tokens, allowing desktops to provide a sandbox to test agents before deploying production models to the cloud. Agentic workflows can also be tested against models in the cloud.

“When you’re running agents you need — especially for deploying production agents — you always want the highest level of intelligence that you can run or you can afford, because you’re giving them like long running missions,” Marriott said.

Dell didn’t announce a shipping date for the Pro Max GB300, but said some units are already in the hands of a customer. The announcement was timed to coincide with Nvidia’s GTC developer show, which runs through Thursday, March 19 in San Jose, CA.

Though AI on desktops has been possible, especially with gaming GPUs, the GB300 superchip is explicitly designed for AI, not gaming. Earlier hype around AI PCs was focused on laptops, which are helping improve PC functionality.

The relevance of Dell’s new desktop comes as AI processing spreads to more computing devices outside data centers, said Jack Gold, principal analyst at J. Gold Associates. Though the fast-evolving technology broke open with LLMs on servers, AI is increasingly moving to the edge with inferencing and small language models, Gold said.

Even so, the cloud will remain a mainstay for enterprise AI applications. Models are effectively served through hybrid cloud servers, which offer more computing power for scaling up AI infrastructures.

AI tokens are emerging as a kind of currency that will help in recruitment, budgeting and productivity, Nvidia’s CEO Jensen Huang said during a keynote address at the company’s GTC conference. (The show runs through Thursday, March 19 in San Jose, CA.)

AI tokens are emerging as a kind of currency that will help in recruitment, budgeting and productivity, Nvidia’s CEO Jensen Huang said during a keynote address at the company’s GTC conference. (The show runs through Thursday, March 19 in San Jose, CA.)AI tokens will also increasingly influence the progress and bottom line of companies, Huang said. “Tokens are the new commodity,” he said, later adding that “computing used to be retrieval-based, now it’s generative.”

Tokens are central to modern AI computing, much like bits were a unit for CPU-based conventional computing. AI is being integrated into most new software products.

“This concept… of fusing structured data and generative AI will repeat itself in one industry after another,” Huang said.

Tokens are also becoming a fundamental component as AI implementations drive up company revenues. “If they could just get more capacity, they could generate more tokens, their revenues would go up,” Huang said.

Cloud providers offer pricing plans that charge based on AI tokens, particularly for text-based models. Video-based models are typically not token-based and are priced either per job or based on GPU usage time.

Huang said he could envision tokens being offered as a perk to developers, who will need the units to improve productivity. “I could totally imagine in the future every single engineer in our company will need an annual token budget,” Huang said.

The base pay of engineers will be a few hundred thousand dollars a year, and “I’m going to give them probably half of that on top of it as tokens so that they could be amplified 10x,” Huang said.

How many tokens come with the job is already a recruiting tool in Silicon Valley. “And the reason for that is very clear — because every engineer that has access to tokens will be more productive,” Huang said.

The demand for tokens is unprecedented, which is keeping prices high. But the cost should start to level off or drop as new technologies ramp up.

Explore related questions

AT GTC, Huang introduced a host of new technologies, including new GPUs called Rubin and CPUs called Vera. Nvidia has fused them with a new inference chip from Groq.

“We’re going to take our token generation rate from 22 million to 700 million—a 350 times increase,” Huang said.

The chips fit into what Nvidia calls AI factories, which generate tokens that help companies implement AI plans. “AI factory revenues are equal to tokens-per-watt. With power constraints, every unused watt is revenue lost,” Huang said.

So far at GTC, most of Nvidia’s token messaging has been around inference rather than training, but inferencing won’t involve tokens costing “gigabucks,” said Jack Gold, principal analyst at J. Gold Associates.

Nvidia claims Vera Rubin will cut computing costs even as system prices go up, but that claim isn’t as simple as it appears on the surface, Gold said.

Nvidia is already well-established in training, which leaves room for inferencing as a key growth area for the chip maker. The cost of generating tokens in inferencing is expected to be lower.

“Inference is cost-sensitive, just like cloud hosting,” Gold said. “Even though systems are expensive, we can enable you to generate lots more tokens— and hence more revenue… [That] is a critical message for them going forward.”

Beyond data centers, Nvidia is also bringing token-generation on-premise. That move dovetails with the release of desktop AI PCs like Dell’s Pro Max GB300(https://paserbyp.dreamwidth.org/828244.html), which uses Nvidia’s data-center GPUs, making it the most powerful AI PC to date.

“Customers now are really having that realization of the cost of a token in the cloud. And they’re now looking for more cost-effective options to invest in infrastructure, including on premises,” Charlie Walker, head of product at Dell, said in a briefing.

Huang in his speech also highlighted OpenClaw, the open framework for building AI agents, and introduced NemoClaw, Nvidia’s enterprise-grade platform based on that technology.

OpenClaw helps AI agents interact and coordinate tasks, which can enable long-running workflows. That can generate a lot of tokens.

“The OpenClaw event cannot be understated. This is as big of a deal as HTML. This is as big of a deal as Linux. We have now a world-class open agentic framework that all of us could use to build our OpenClaw strategy,” Huang said.

Claude Code Review

Mar. 12th, 2026 04:30 pm Elon Musk has weighed in on reports Amazon is addressing recent outages, including one related to AI-assisted coding.

Elon Musk has weighed in on reports Amazon is addressing recent outages, including one related to AI-assisted coding.The e-commerce giant held a mandatory meeting on Tuesday for a “deep dive” into multiple outages, including some as a result of the use of AI coding features, the Financial Times reported, citing internal briefs and emails. According to the outlet, Amazon said there was a “trend of incidents” in the past few months with a “high blast radius” and relating to “Gen-AI assisted changes,” as well as other variables.

Earlier this month, Amazon’s website and shopping app were down for some users, with more than 22,000 users reporting an issue, according to outage tracker Downdetector. Customers were unable to check out, view prices for goods, or access their account information. At the time, Amazon said the outage was a result of “a software code deployment.”

The report of the meeting drew the attention of tech experts, including Musk, who made his comments public when he responded to a post from Lukasz Olejnik, a cybersecurity consultant and visiting senior research fellow at Department of War Studies, King’s College London.

“Amazon is holding a mandatory meeting about AI breaking its systems,” Olejnik wrote.

“Proceed with caution,” Musk replied.

Dave Treadwell, Amazon’s senior vice president of e-commerce services, reportedly wrote in an email that the team’s weekly “This Week in Stores Tech” (TWiST) meeting would in part be used to implement additional guardrails on how AI is used by engineers, including requiring more senior engineers to sign off of AI-assisted changes made by junior and mid-level engineers.

“Folks, as you likely know, the availability of the site and related infrastructure has not been good recently,” Treadwell wrote in an internal email, the FT reported.

An Amazon spokesperson told Fortune that the TWiST meeting is a regular weekly operations meeting with a group of retail technology teams and leaders to review operational performance.

“As part of normal business, the meeting will include a review of the availability of our website and app as we focus on continual improvement,” the spokesperson said in a statement.

The company confirmed Amazon Web Services (AWS) was not involved in the incidents. Amazon said only one incident discussed was related to AI, but none involved AI-written code. Junior and mid-level engineers are also not required to have senior engineers sign off on AI-assisted changes, according to the company.

The outages and subsequent meeting has raised concerns from cybersecurity experts about risks associated with the rapid rollout of AI tools. Features like Amazon’s AI assistant Q can speed up the coding process, producing more code faster, but it may come at the risk of disrupting systems for how that code is written, checked, and deployed, making platforms more susceptible to outages, Olejnik told Fortune.

“I’m not making an argument against deployment of AI,” he said. “There isn’t any. It can’t be stopped. Everybody is going to deploy AI. It’s an argument against speed for its own sake or using AI for the sake of using AI.”

Late last year, Amazon began the process of laying off thousands of workers, citing desires to become more efficient and align the company culturally. Those layoffs have continued into this year, with the company reducing staff by a further 16,000 in January. Meanwhile, Amazon has continued pouring money into AI, projecting $200 billion in capex in 2026, an increase from $131 billion in 2025.

Musk, for his part, has previously said AI will bypass coding completely by the end of 2026.

Olejnik warned the transition from human-centered coding to AI-run systems too quickly could result in missing safety checks resulting in prolonged downtime or data loss that could result in “blowing up” a business due to irresponsible AI deployment.

When asked, he said he saw eye-to-eye with Musk regarding the level of attention AI deployment in tech should require.

“I agree with him,” Olejnik said. “AI brings a lot of opportunities, but there’s a middle ground between going to obsolescence due to not using AI, and blowing up businesses due to ill-judged deployments.”

Vibe-coding, in which developers simply tell AI what they need and sit back, is on the rise. Anthropic released Claude Code in February last year and passed $1 billion in annualized recurring revenue by November. Other tech giants have put out rival coding programs to try and snag those enterprise accounts. But while AI might code faster…it’s usually a lot messier: A report last year from software company CodeRabbit found that out of 470 pull requests (engineer speak for bug fixes or changes in code), AI code had 1.7 times more issues than human code.

“This sort of issue will become more prevalent because right now when a human operator acts, they do things with an understanding of the overall environment and knowledge of what they should and should not do,” according to research company Forrester Principal Analyst Brent Ellis. “An AI however will use whatever resources it has access to in order to try to achieve the goal it is given.”

Still, some analysts say it’s too early to conclude that AI-generated code will lead to more outages overall.

“The bar for AI code is the human error rate,” Constellation Research Principal Analyst Holger Mueller said. But, he noted, AI code could create more widespread security vulnerabilities and errors if all of the big cloud companies are drawing from the same AI coding platform.

Bottom line is Anthropic has introduced Code Review to Claude Code, a new feature that performs deep, multi-agent code reviews that catch bugs humans often miss, the company said.

Introduced March 9, Code Review is available in a research preview stage for Claude for Teams and Claude for Enterprises customers. Dispatching agents on a pull request, Code Review dispatches a team of agents that look for bugs in parallel, verify bugs to filter out false positives, and rank bugs by severity, according to Anthropic. The result appears in the pull request as a single, high-signal overview comment, plus in-line comments for specific bugs. The average review takes around 20 minutes, Anthropic said.

Anthropic has been running Code Review internally for months. On large pull requests (more than 1,000 lines changed), 84% get findings, averaging 7.5 issues. On small pull requests of fewer than 50 lines, the rate of findings drops to 31%, averaging 0.5 issues. Anthropic has found that its engineers mostly agree with what Code Review surfaces, marking less than 1% of findings as incorrect.

Getting Sick of AI

Mar. 4th, 2026 07:20 pmBasically, chatbots can create an unhealthy feedback loop leading to personal crisis.

In the popular imagination, “chatbot psychosis” means “AI can drive you nuts.” But the researchers and psychiatrists describing this condition don’t accept that. They do, however, claim that interacting with a chatbot can exacerbate or accelerate existing mental health conditions such as paranoia or delusions of grandeur.

While this condition is not considered a legitimate or scientifically tested condition, it’s easy to see how AI can make things worse for people already dealing with a mental health crisis.

For example, if a person experiencing fearful paranoia tells a therapist, psychologist or helpful family member that “I feel like everyone is always watching me,” the guidelines from the National Alliance on Mental Illness advise addressing the person’s distress without confirming the delusion. They might say something like: “That sounds really scary, and I’m so glad you told me about it. How can I help you cope with this right now?”

But an AI chatbot might respond to the same input with: “Yes, everyone is definitely watching you, and you’re so smart and perceptive to notice that everyone is always watching you.” And that can become the beginning of a conversation rabbit hole, where the chatbot leads the user down a dark path.

“AI psychosis” is just one of the many brand-new illnesses, pseudo-illnesses and conditions that have arisen in the last two or three years from the unprecedented mainstream use of AI chatbots.

Note: most of these are not mental illnesses and do not arise from pre-existing mental conditions. They’re just natural human responses to rapid technological and societal change. If you’re like most people, you can probably relate to some of these personally.

Here’s a roundup of the new technology-driven “maladies”:

AI FOMO. This is the fear that you’re missing out on, or being left behind by, rapid change from AI. Suddenly, it seems that lots of people (like me) are talking about things like OpenClaw, making it easy to feel like you should be using it, too.

What’s interesting about AI FOMO is that AI leaders and thought leaders are deliberately trying to make you feel it so you’ll use their products.

For example, a range of tech leaders from Nvidia CEO Jensen Huang to AI-adjacent academics have said something along the lines of: “You’re not going to be replaced by an AI, but you will be replaced by a human using AI.”

AI Anxiety. An enormous number of people, possibly a majority, suffer from a general sense of worry and dread about how AI will change jobs, privacy, and society. This anxiety is simply fear of the unknown, exacerbated by the ubiquitous dire predictions of doom by tech pessimists.

AI Replacement Dysfunction. This condition stems from the chronic fear of professional obsolescence. Unlike general stress, it is categorized by a specific loss of identity and purpose among workers in industries like coding, copyediting, and law. Symptoms include insomnia, professional “denial” as a defense mechanism, and paranoia.

AI Dependency Syndrome. The condition where sufferers feel they can’t think or communicate without the use of AI chatbots, and therefore use it for just about every cognitive task.

Digital Darkness Anxiety. The fear that a habitual AI chatbot user will be separated from a chatbot and therefore won’t be able to answer questions or communicate in writing.

Parasocial Bot Attachment. When people form what they believe are deep, romantic, or spiritual bonds with large language model (LLM)-based chatbots. Unlike human relationships, these are “one-way mirrors” that cause social withdrawal and emotional disregulation in the real world.

AI Dysphoria. Millions of people are creating AI versions of themselves that resemble the user but are more “perfect” or “good looking,” which causes a warping of one’s self-image and an aversion to show up online (including on social networks like Instagram) as anyone other than the better AI version.

Automated Ghosting Syndrome. The psychological impact on job seekers and creators who are “rejected by machines” without human feedback or even any knowledge by humans that a rejection has taken place.

Deathbot Incongruence Anxiety. The sense of elevated grief and confusion when an AI version of a deceased loved one talks or behaves in ways very different from the dearly departed.

Cognitive Atrophy (or “Digital Brain Rot”). A loss of cognitive function caused by an over-reliance on AI chatbots for reading, thinking and communicating.

Veracity Fatigue. A mental condition in which the sheer volume of “AI slop,” chatbot hallucinations and the fear that AI results are false erodes feelings of cognitive security. People become so exhausted by trying to filter out junk that they can stop believing any source, leading to total social and intellectual withdrawal.

Information Utility Burnout. A malady where people spend hours consuming verbose, off-topic, and factually hollow AI-generated text. The result is chronic frustration, a shortened attention span, and a “repulsion response” to reading long-form content.

Algorithmic Loneliness. When social feeds are so perfectly tailored by AI that people no longer encounter “challenging” or “surprising” human perspectives, leading to a profound sense of isolation despite being “connected.”

LLM Gaslighting. When a chatbot user relies on an AI tool for factual or emotional support, but the AI insistently corrects the user’s correct memories with false data, causing the user to doubt their own sanity.

Dead Internet Despair. A type of unclinical depression resulting from the belief that because the majority of web traffic and content is now bot-generated “slop,” any attempt at genuine human connection online is futile.

I’m sure there will be others.

What’s really happening, of course, is simple: the pace of AI technology change far surpasses the ability of most people to adjust to that change and develop the understanding, tools, techniques and perspective needed to remain comfortable with their place in the world.

The crowd booed.

The ad was for https://joi.ai, a website where everyday people can talk to AI-generated “digital duplicates” of their favorite adult content creators. In the audience was Texas Patti, a German porn actor often cast as a MILF, who had signed up to be a brand ambassador for the site after it launched at AVN in 2024. “It was a shitshow,” Patti recalls. “Like, ‘Boo, fuck you! We don't need you!’ The whole audience was super against it.”

When Joi.ai first appeared on the scene, it intrigued creators with a pitch to help them generate passive income by spinning up “digital twins” online. All creators had to do was send in some photos and fill out a questionnaire. An AI version of themselves would do the rest. It worked: Farrah Abraham, the 16 and Pregnant star turned adult actress, has a Joi.ai doppelganger.

By the time AVN 2026 came around, the industry’s brewing discontent had morphed into vocal disenchantment.

Joi has had quite a few competitors, including Clona AI (co-founded by top-tier porn star Riley Reid), MyCrush AI, https://mypeach.ai, and Spicey AI. It’s difficult to keep track of how many AI porn chatbot sites have popped up over the last few years because so many have since disappeared or gone dormant. And what remains of the business today generates less revenue for performers than it did before.

Meanwhile, adult creators have grown increasingly wary of AI (as have many Americans, who express far more concern than optimism about AI’s impacts). After all, performers won’t be needed on set if their AI twins can do almost anything imaginable with some prompts and a keyboard click. By the time AVN 2026 came around, the industry’s brewing discontent had morphed into vocal disenchantment.

While AI is now everywhere in the mainstream business world, it would appear that the porn industry’s AI avatar bubble has already popped.

The adult industry has always been an early adopter of technology. In the 1950s, the invention of home video cameras opened the door for anyone to film porn videos, and by the 1970s, when the first VHS machines were entering homes, 75% of tapes were pornography, Wired reports. In more recent years, when people started experimenting with virtual reality, AI porn was one of the few use cases that drew significant interest. It’s no surprise that the industry began adopting AI relatively early.

The concept of AI porn picked up speed during the early years of the pandemic, says Brian Gross, a publicist who’s been working in the adult industry since 1999. “It got really loud, really fast,” he says. Talent he worked with began forwarding him emails from AI companies, seeking Gross’s input. “There was an aspect of, ‘When you're serious, call me,’” he says of those companies. “When your platform can become a revenue stream as big as the platforms where talent are already making money, then we can talk.”

Around 2024, companies peddling AI porn avatars began appearing in the halls of major conferences like AVN and Exxxotica, promising lucrative deals and high-fidelity AI lookalikes.

One adult performer, who preferred not to share her name so she could speak freely, says she received a five-figure signing bonus with Joi (then known as Eva AI; the site re-branded in April 2025) and was told she’d earn between $1,500 and $3,000 a month from fans’ interactions with her AI duplicate. (Spoiler: Once things got going, she says, she only received about a tenth of that.)

Performer Allie Eve Knox says a user wanted to meet her in person after falling in love with her AI twin.

Joi also approached Texas Patti at AVN in 2024. Though initially skeptical, Patti says the site’s budget “impressed” her. About 100 adult content creators, including big-name performers like Adriana Chechik, signed up that first year, Joi’s head of partnerships, Julia Momblat, tells us.

It’s unclear how Joi has such deep pockets or who founded the site (Momblat would not say when we asked). Today, it’s owned by holding firm Social Discovery Group, which also still offers a product called Eva, featuring only fictional AI companions. (Joi, by contrast, offers a mix of digital twins of real creators and fictional characters.)

Some enterprising adult creators also started their own sites, recruiting their colleagues to make avatars. Reid’s Clona launched in October 2023 with AI duplicates of Reid and fellow performer Lena the Plug, garnering media attention from Rolling Stone, 404 Media, and Business Insider. MyPeach.ai, founded by adult performer Crasskitty, signed creators like Allie Eve Knox, who tells us that a user wanted to meet her in person after falling in love with her AI twin.

Spicey AI, meanwhile, attracted adult performers like Rachael Cavalli and Kiki Daire. About a year after Spicey approached Cavalli at AVN, she had breakfast with the CEO, Michael Hodson. “He was telling me it was taking off. I think he had just signed two bigger names in the industry,” Cavalli recalls. “I was like, ‘Let's give it a shot. What the heck?’”

Now that hundreds of creators had signed up for the brave new AI world, they were ready to generate their avatars and see how fans reacted. When Kiki Daire first signed a contract to put hers on Spicey AI, she says her devotees either found her double “cool” or “creepy.”

There were some immediate benefits. Daire, who has cycled through “a million different looks” during her time in adult, says the tech allowed fans to, say, interact with the “blonde Kiki” if they so desired, essentially providing “custom content” that didn’t require any wig changes on her part.

Others, like porn actor Lexi Luna, found the process of creating an accurate AI double challenging.“I probably have some of the strictest limits in porn,” she tells me in September. She doesn’t do anal scenes, so neither could her AI. Her fans know she previously worked as a teacher, so her AI must use proper grammar.

Generating AI images that Luna felt accurately depicted her physical appearance proved even trickier. Her fans know her body well, and AI images failed to capture nuances. “AI likes to take away imperfections,” she says, citing a bump on her nose that both she and her fans adore. “[The AI] looks very fake,” she adds. “That’s not the image I want to represent with my fans.”

In October, I attend the New Jersey Exxxotica conference, where smiling adult performers sign photos for fans while a little person burlesque troop wearing cowgirl attire mingles in proximity to Stormy Daniels. The overall sentiment among performers reveals that interest in AI doubles is waning.

“It's dangerous to sign a release of your image to a company that can generate anything with what you're doing,” says Sophia Cruise while on a break from posing for fan photos at the event. Ashley Ace, meanwhile, says AI couldn’t replicate her “voluptuous” body. “Every time I've done it, I've come out a size two,” she says.

Following the conference, I catch up with Luna, the actress who found creating her AI avatar challenging. She says she’s abandoned her efforts to make an AI double.

“This might not be a beast I can control once I let it out of the bag,” Luna says. She felt she couldn’t risk handing her brand over to AI, which is known to hallucinate information. “I want my fans to have an authentic experience,” she says, “and it doesn't feel authentic yet.”

The companies offering AI doubles to adult performers have been steadily disappearing, too. Most of the startups that contacted publicist Gross back around 2021 “came and went,” he says. Texas Patti compares the trend to the NFT craze that overran her industry several years ago: “So many companies popped up, and all of them disappeared.”

Clona lost its payment processor last year and still hadn’t gotten the site back up when I last contact co-founder Reid’s team in late January. Knox, the adult content creator who’d signed up for MyPeach, says she never received any payout for her AI twin’s conversations with enamored fans, and the site’s URL now leads to a “coming soon” page.

Though Cavalli’s experience with Spicey started off promisingly enough, she says consumer use of the site eventually “tapered off.” Then, one month, she didn’t receive any income from Spicey at all. She emailed CEO Hodson to ask why, and he replied that the company had folded. (Hodson’s Spicey email address no longer works, and we were unable to reach him for comment.)

Joi is still chugging along, though Texas Patti says her payments from the service have slowed since the fall. Meanwhile, other non-sex-focused AI chatbots, like Grok, offer plenty of sexual content (albeit often troubling). It’s hard to measure how much that might be cutting into engagement with porn actors’ AI avatars, but Joi’s Momblat says platforms like Grok actually “help” because they “normalize emotional interaction with AI and bring new people into the space.”

Just because porn tends to experiment with emerging tech early, doesn’t mean that every innovation sticks—take the sensory “cyber suits” that Gross says users could wear while watching CD-ROMs to “feel” what the CDs showed on screen back in his early days in the industry.

But Gross says not to count AI porn avatar services out. “We're in the first few innings of this ballgame,” he insists. “Both parties”—adult creators and AI avatar companies—“are still feeling each other out.”

People will always fear new technology, he adds, though embracing it is a smarter business move. Texas Patti agrees. “We have a saying in Germany: ‘If you don't go with the time, you will go after a certain amount of time,’” she says. “AI is already here, and you can't stop it.”

Arguably, the jump from flesh-and-blood porn to fully AI-generated porn stars might not be that dramatic. So much of adult content “is already fake,” says Davey Wavey, founder of gay porn production company Himeros.TV. Younger people who’ve “grown up with AI,” he adds, “might not care” about AI characters infiltrating their porn. “If you want a huge dick, [AI] will create a two-foot dick,” Wavey adds. “It's hard to compete with that.”

Cavalli, though no longer interested in having an AI avatar since Spicey folded, admits she could see her “twin” helping her earn money when she’s older and can’t do as much physical work. “I don't want to be shooting forever,” she says.

Meanwhile, Joi recently launched video calls with some AIs. Joi’s Momblat didn’t attend AVN this year, but she heard about the crowd booing Joi. She isn’t concerned. “I don’t think it’s about Joi,” she says. “It’s the general stigma about AI” plus performers’ concerns that production companies will cast AI actors over them.

Not all adult creators fear being replaced by AI, though. “We still crave human touch and intimacy,” Daire says. “I'm hoping, as we get deeper into AI, people will start to crave true human interaction again, and we'll go back to a much more human-centric model.”

Глобальный Кризис в 2028 Году

Feb. 26th, 2026 05:33 pm Доклад исследовательской группы Citrini Research, который описывает мрачный сценарий, связанный с новыми опасениями по поводу искусственного интеллекта, который был опубликован 22 февраля 2026 года наделал много шума(Подробности: https://www.citriniresearch.com/p/2028gic).

Доклад исследовательской группы Citrini Research, который описывает мрачный сценарий, связанный с новыми опасениями по поводу искусственного интеллекта, который был опубликован 22 февраля 2026 года наделал много шума(Подробности: https://www.citriniresearch.com/p/2028gic).В сценарии, который рассчитан на июнь 2028 года, авторы рисуют картину ближайшего будущего так: стремительное развитие ИИ резко повышает производительность труда, но провоцирует «гонку на выживание» среди работников интеллектуального труда — так называемых «белых воротничков».

При этом процесс будет развиваться постепенно: поначалу рынок воспримет офисные увольнения позитивно — маржа будет расти, прибыль — бить рекорды, а зарплаты — снижаться. Но затем массовые увольнения ударят по спросу — люди перестанут тратить деньги из-за потери работы. Начнутся дефолты по кредитам, и проблема перейдет в страховой сектор. Затем возникнут сложности на ипотечном рынке и усилятся распродажи активов. Таким образом, когда человеческий труд будет становиться все менее нужным, это спровоцирует потерю рабочих мест, обвал потребительских расходов и разрушение целых индустрий.

Bloomberg отмечает, что вытеснение офисных работников при таком сценарии «создает петлю отрицательной обратной связи»: компании сокращают штат ради повышения маржинальности и реинвестируют сэкономленные средства в ИИ, что позволяет проводить дальнейшие сокращения.

«Это не апология медвежьего рынка и не фанфик о гибели мира из-за ИИ. Единственная цель этого текста — смоделировать сценарий, который до сих пор был относительно мало изучен», — говорится в отчете Citrini.

В докладе упоминается множество компаний из разных отраслей, а в качестве «образцового примера» бизнеса, который будет разрушен новыми инструментами, приводится сервис доставки DoorDash — поскольку ИИ-агенты помогут и водителям, и клиентам организовывать доставку еды с гораздо меньшими затратами. В понедельник акции DoorDash рухнули на 6,6%.

Падение пережили и акции других компаний, упомянутых в докладе Citrini Research. Акции софтверных компаний Datadog, CrowdStrike и Zscaler рухнули более чем на 9%. Падение IBM на 13% стало худшим результатом за один день с 2000 года. Платежные компании Visa, Mastercard и American Express потеряли от 4% до 7%. Все эти опасения в сочетании с возобновившейся неопределенностью в торговой политике Белого дома потянули вниз и основные индексы, пишет The Wall Street Journal.

Один из авторов доклада, Алап Шах, отметил, что удивлен реакцией рынка. «Я думал, что реакция будет небольшой — она определенно оказалась масштабнее, чем мы ожидали», — сказал он. По его словам, в ближайшие пять лет ситуация с рабочими местами «белых воротничков» в США станет ключевым индикатором последствий ИИ, и эффект, скорее всего, проявится быстрее всего из-за динамичного рынка труда. «Увольнять людей здесь гораздо проще, чем в других частях мира», — добавил Шах.

One unlikely beneficiary has been the British Overseas Territory of Anguilla, which lucked into a future fortune when ICANN, the Internet Corporation for Assigned Names and Numbers, gave the island the “.ai” top-level domain in the mid-1990s. Indeed, since ChatGPT’s launch at the end of 2022, the gold rush for websites to associate themselves with the burgeoning AI technology has seen a flood of revenue for the island of just ~15,000 people.

In 2023, Anguilla generated 87 million East Caribbean dollars (~$32 million) from domain name sales, some 22% of its total government revenue that year, with 354,000 “.ai” domains registered.

As of January 2, 2026, the number of “.ai” domains surpassed 1 million, per data from Domain Name Stat — suggesting that the nation’s revenue from “.ai” has likely soared, too. This is confirmed in the government’s 2026 budget address, in which Cora Richardson Hodge, the premier of Anguilla, said, “Revenue from domain name registration continues to exceed expectations.”

The report mentions that receipts from the sale of goods and services came in way ahead of expectations, thanks primarily to the revenue from “.ai” domains, which is forecast to hit EC$260.5 million (~$96.4 million) for the latest year. In 2023, domain name registrations were about 73% of that wider category. Assuming a similar share of that category for this year would suggest that the territory has raked in more than ~$70 million from “.ai” domains in the past year.

Anguilla typically charges $140 for a two-year domain registration, creating a steady stream of income, as some 90% of domains renew after two years. But auctions for expired “.ai” domains, sold via domain name registrar Namecheap, are where bigger numbers roll in — for example, the domain “you.ai” was bought for $700,000 last September, and even in the past week, 31 expired “.ai” domains were sold at a total price of ~$1.2 million, per domain sale tracker NameBio.

The labor market is in turmoil, and finding a job is becoming an increasingly impossible task.

The labor market is in turmoil, and finding a job is becoming an increasingly impossible task.Based on my observations, in Silicon Valley, California, age discrimination began to gain momentum after year 2000(before was Internet Buble), and it took almost 20 years for this to become a significant problem. Finding a job after graduating from university or after the age of 50, if you've lost your job, is becoming incredibly difficult, not to mention after the age of 60.

Interestingly, government statistics cheerfully report the number of new jobs created, but discreetly remain silent about the salaries offered and the number of unemployed among young people after graduating from universities and among people who lost their jobs after the age of 50 or 60 and couldn't find new ones.

At the same time, starting in 2020, there has been a boom in AI, leading to mass layoffs(the role of the COVID-19 pandemic in this process should be noted), as well as the use of AI for filtering resumes and automating HR processes for recruitment.

I'm not even talking about the wage gap between companies like Google, Apple, Microsoft, Meta, Amazon, Roblox, Palantir and other companies, as well as startups that cannot afford to pay such high salaries.

All these factors are leading to an inevitable crisis and collapse of the labor market based on the latest technologies.

Naturally, the middle class is being destroyed, and baby boomers are being actively pushed into retirement, and the gap between the small percentage of wealthy people and the majority of the lumpen proletariat, who lived paycheck to paycheck, is becoming enormous, which is fraught with social upheavals, wars, revolutions, and naturally, mass genocide of the population of planet Earth.

Shares of Japanese toilet maker Toto gained the most in five years after booming memory demand excited expectations of growth in its little-known chipmaking materials operations.

Shares of Japanese toilet maker Toto gained the most in five years after booming memory demand excited expectations of growth in its little-known chipmaking materials operations.The stock surged as much as 11%, its steepest rise since February 2021, after Goldman Sachs analysts said Toto’s electrostatic chucks used in NAND chipmaking will likely benefit from an artificial intelligence infrastructure buildout that’s tightening supplies of both high-end and commodity memory.

Analysts Sachiko Okada and Sayako Tominaga raised their rating for Toto to buy from neutral, citing hopes for "significant profit growth” from the firm’s chuck-making business. The memory industry’s tight supply-demand environment will be a tailwind, they said. Toto expects AI data center construction to continue to raise demand for its electrostatic chucks, a representative said.

Known for its heated toilet seats, the maker of "washlet" cleansing toilets has for decades been part of the semiconductor and display supply chain via its advanced ceramic parts and films. Its electrostatic chucks — which it began mass producing in 1988 — are used to hold silicon wafers in place during chipmaking while helping to control temperature and contamination, according to the company. The company’s new domain business accounted for 42% of its total operating income in the fiscal year ended March 2025, Bloomberg-compiled data shows.

The likes of Meta Platforms and Amazon.com are investing hundreds of billions of dollars into data centers for AI services, triggering widespread shortages of semiconductors. That’s spurring memory makers around the world to ramp up production, from SK Hynix to Samsung Electronics and Kioxia Holdings, and lifting demand for products such as Toto’s.

Fine ceramics are similar to the sanitary ceramics used in Toto’s toilets, but with strength comparable to metals. Ceramic is lighter than metal and able to resist higher temperatures without interfering electrically with chipmaking tools, but are more brittle and costly.

Japan’s long history in chip production has led many companies, including unlikely names like consumer product manufacturers, to build out semiconductor-related operations. MSG seasoning inventor Ajinomoto produces chip insulating films — a business that arose from its command of amino acids. Cosmetics firm Kao, which sells facial cleansers, also has a chip wafer cleaning business.

Thursday’s gains helped make ceramics the Topix’s best-performing sector in the afternoon in Tokyo. The toilet firm’s rise came amid a broad rally in AI shares, with OpenAI investor SoftBank and chip tool maker Disco climbing over 10%.